I am a Master’s student in Applied Math at Fudan University and an incoming CS Ph.D. student at Yale University, advised by Prof. Rex Ying. My research focuses on the reliability and verification of multimodal AI, aiming to build systems that integrate explicit structures with dynamic reasoning capabilities to ensure safe, verifiable, and logically sound outputs. Currently, I am exploring verifiable multimodal code generation and principled human-in-the-loop (HITL) mechanisms for error-aware autonomous agents. Feel free to reach out if you’d like to learn more about my work, chat, or explore potential collaborations. You can find my publications on Google Scholar.

(Note: You might notice some early Medical AI publications on my Google Scholar. These stem from my first-year lab rotation exploring probabilistic modeling and uncertainty estimation. While I maintain close ties with my brilliant former colleagues, my research is now entirely focused on domain-agnostic multimodal systems.)

🔥 News

- 2026.03: Two papers (MacTok and GIFT) accepted to CVPR 2026.

- 2026.02: Joined Microsoft Research Asia (MSRA) as a Research Intern.

- 2026.02: Two papers (ARTDECO and IVQ) accepted to ICLR 2026.

- 2025.09: Two papers (GOOD and OrderMind) accepted to NeurIPS 2025.

- 2025.09: Loong accepted to a NeurIPS 2025 Workshop.

- 2025.06: Dark-ISP accepted to ICCV 2025.

- 2025.05: Joined Shanghai AI Lab as a Research Intern, working with Jie Fu.

- 2025.04: Welcomed two cats into my life: Fellow and Putao 🐱🍇

- 2025.01: MVP accepted to ICLR 2025.

- 2024.12: Started a research internship with Prof. Chenyang Si and Prof. Ziwei Liu.

📝 Selected Publications

My research primarily focuses on two directions: Robust Representation in Complex Environments and Safety and Verification in AI Systems.

Robust Representation in Complex Environments

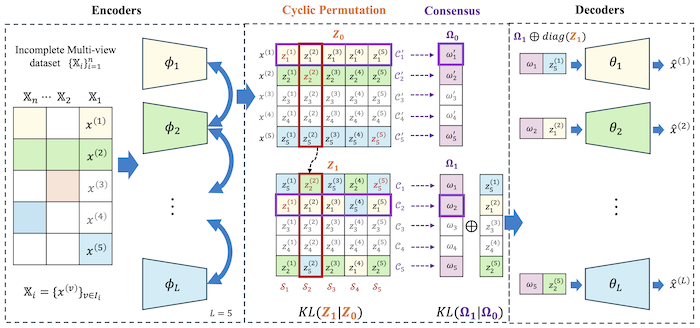

MVP: Deep Incomplete Multi-view Learning via Cyclic Permutation of VAEs

Xin Gao, Jian Pu

- MVP introduces a novel approach to incomplete multi-view representation learning by leveraging latent space correspondences in Variational Auto-Encoders, enabling the inference of missing views and enhancing the consistency of multi-view data even with irregularly missing information.

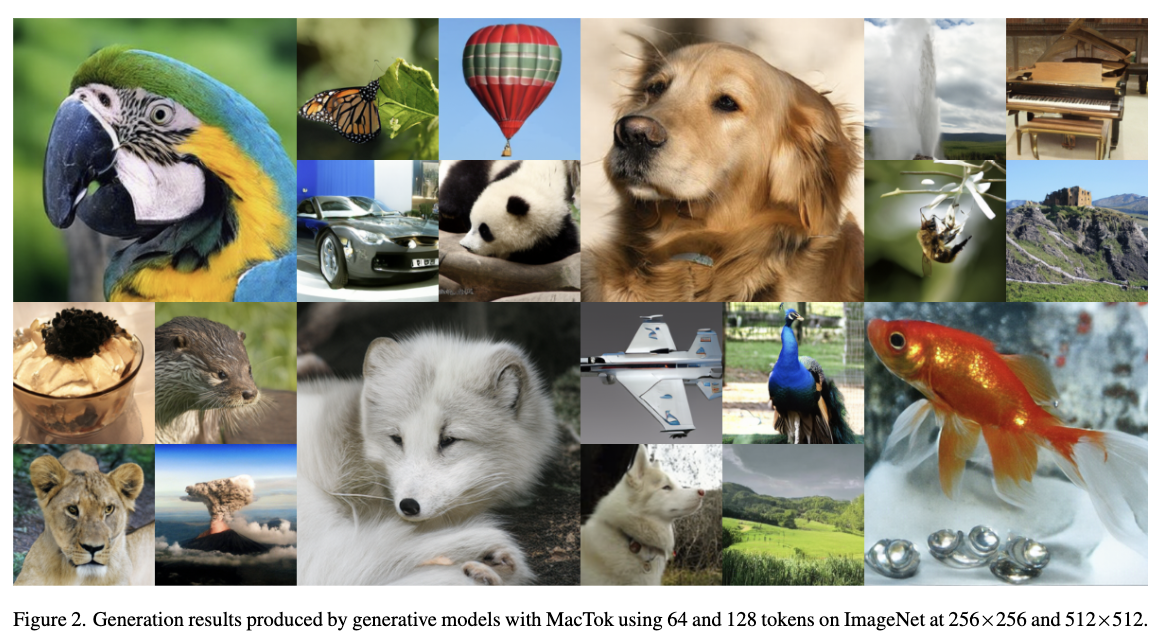

MacTok: Robust Continuous Tokenization for Image Generation

Hengyu Zeng#, Xin Gao#, Guanghao Li, Yuxiang Yan, Jiaoyang Ruan, Junpeng Ma, Haoyu Albert Wang, Jian Pu

- MacTok identifies and mitigates the severe “posterior collapse” failure mode in continuous image tokenizers. We formulate tokenization as a rigorous information preservation task by introducing DINO-guided semantic masking and multi-level representation alignment, forcing the model to infer robust semantics from incomplete visual evidence. This prevents structural degradation and yields state-of-the-art generation fidelity (gFID 1.52 at 512×512) with up to 64× fewer tokens.

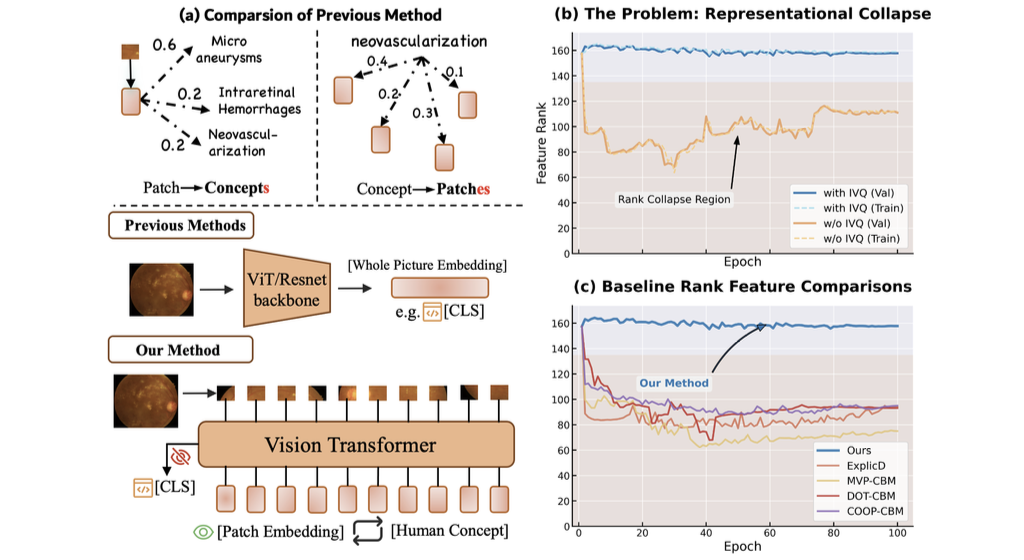

Escaping Low-Rank Traps: Interpretable Visual Concept Learning via Implicit Vector Quantization

Shujian Gao, Yuan Wang, Chenglong Ma, Xin Gao, Jiangtao Yan, Junzhi Ning, Cheng Tang, Changkai Ji, Huihui Xu, Wei Li, Ziyan Huang et al.

- IVQ addresses representational collapse in Concept Bottleneck Models (CBMs), where vision-concept alignment degrades under low-rank feature traps. Rather than imposing a hard, lossy bottleneck, IVQ acts as a structural regularizer that anchors patch features to learned semantic prototypes without quantizing the forward pass, preserving high-rank and structured representations for robust, interpretable concept learning.

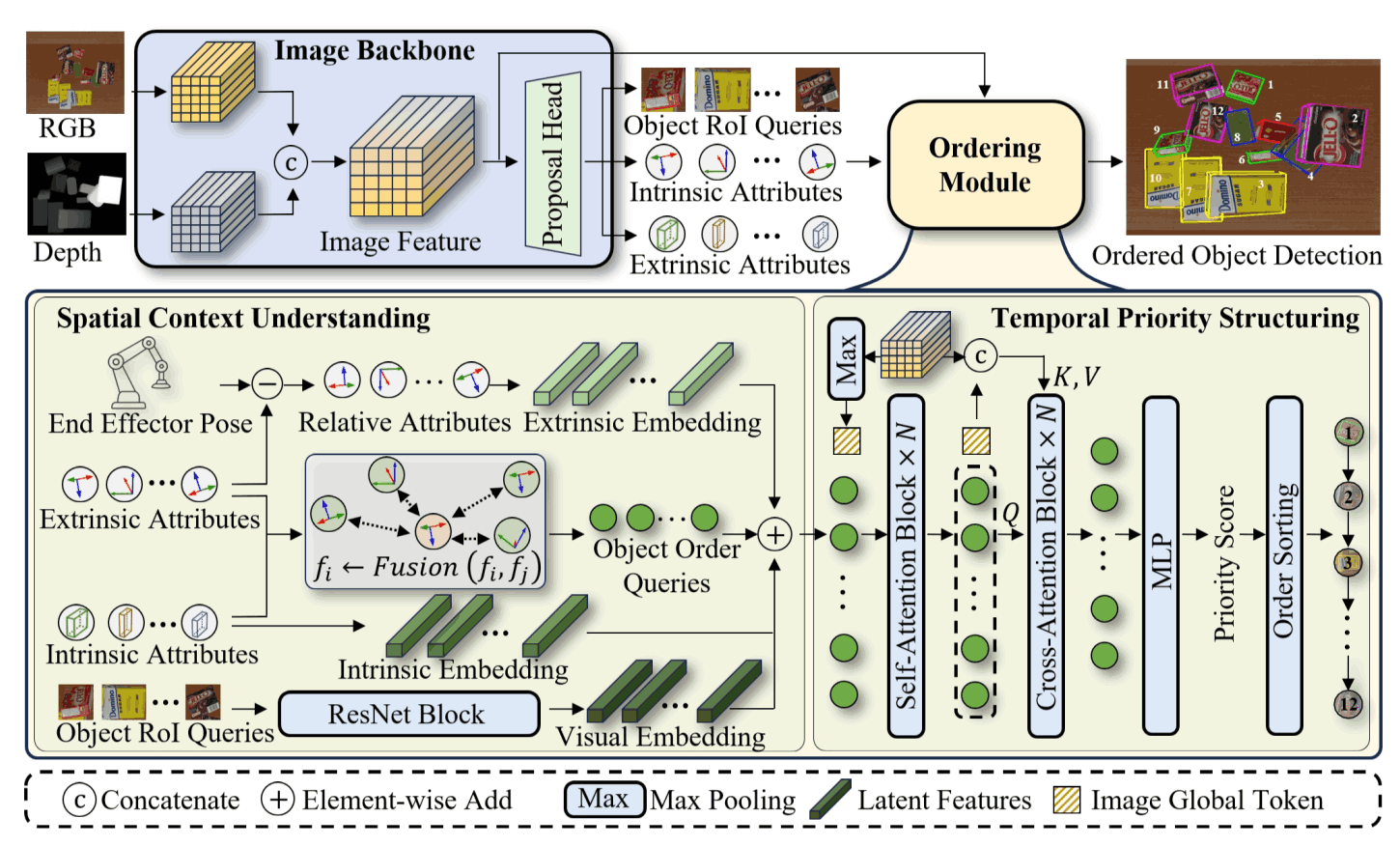

Learning Spatial-Aware Manipulation Ordering

Yuxiang Yan, Zhiyuan Zhou, Xin Gao, Guanghao Li, Shenglin Li, Jiaqi Chen, Qunyan Pu, Jian Pu

- This paper introduces OrderMind, a spatial-aware manipulation ordering framework that learns object priorities from local geometry via a kNN spatial graph and a lightweight temporal module, supervised by VLM-distilled spatial priors. It also presents the Manipulation Ordering Benchmark (163k+ samples) for cluttered scenes.

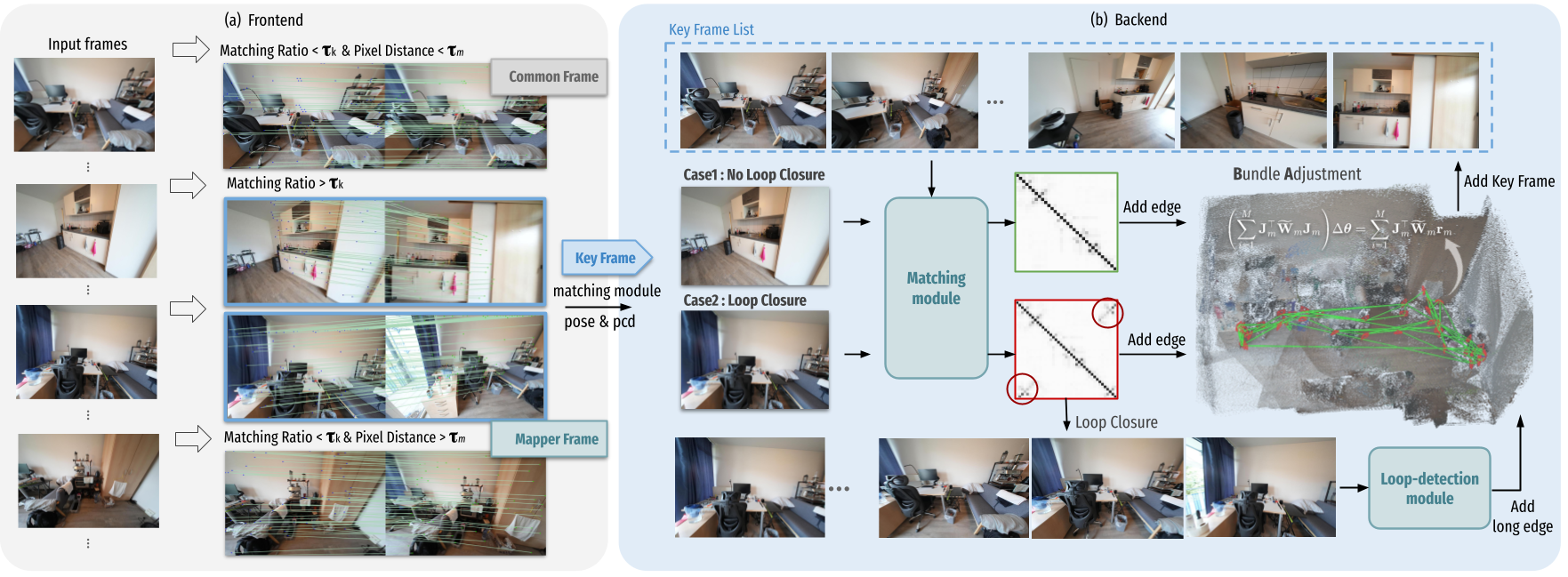

Guanghao Li, Kerui Ren, Linning Xu, Zhewen Zheng, Changjian Jiang, Xin Gao, Bo Dai, Jian Pu, Mulin Yu, Jiangmiao Pang

- ARTDECO unifies feed-forward 3D foundation priors with SLAM-style global optimization, and introduces a hierarchical 3D Gaussian scene representation with LoD-aware rendering to achieve efficient, robust, and high-fidelity on-the-fly monocular 3D reconstruction.

Safety and Verification in AI Systems

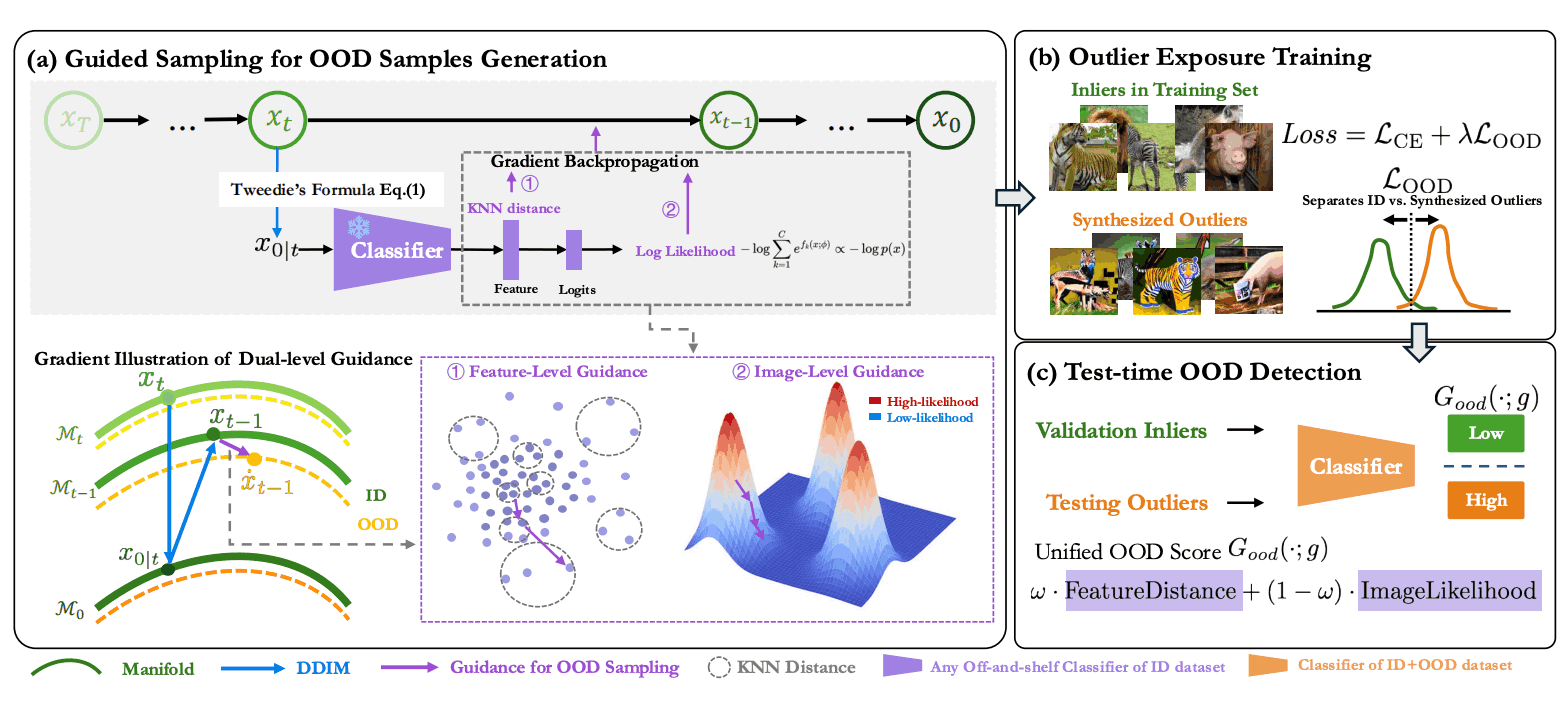

GOOD: Training-Free Guided Diffusion Sampling for Out-of-Distribution Detection

Xin Gao, Jiyao Liu, Guanghao Li, Yueming Lyu, Jianxiong Gao, Weichen Yu, Ningsheng Xu, Liang Wang, Caifeng Shan, Ziwei Liu, Chenyang Si

- GOOD is a training-free diffusion guidance framework that shapes a robust OOD/ID decision boundary. It steers sampling with two gradients: an image-level gradient toward low-density regions and a feature-level gradient toward sparse regions of the feature space, generating diverse, controllable OOD examples.

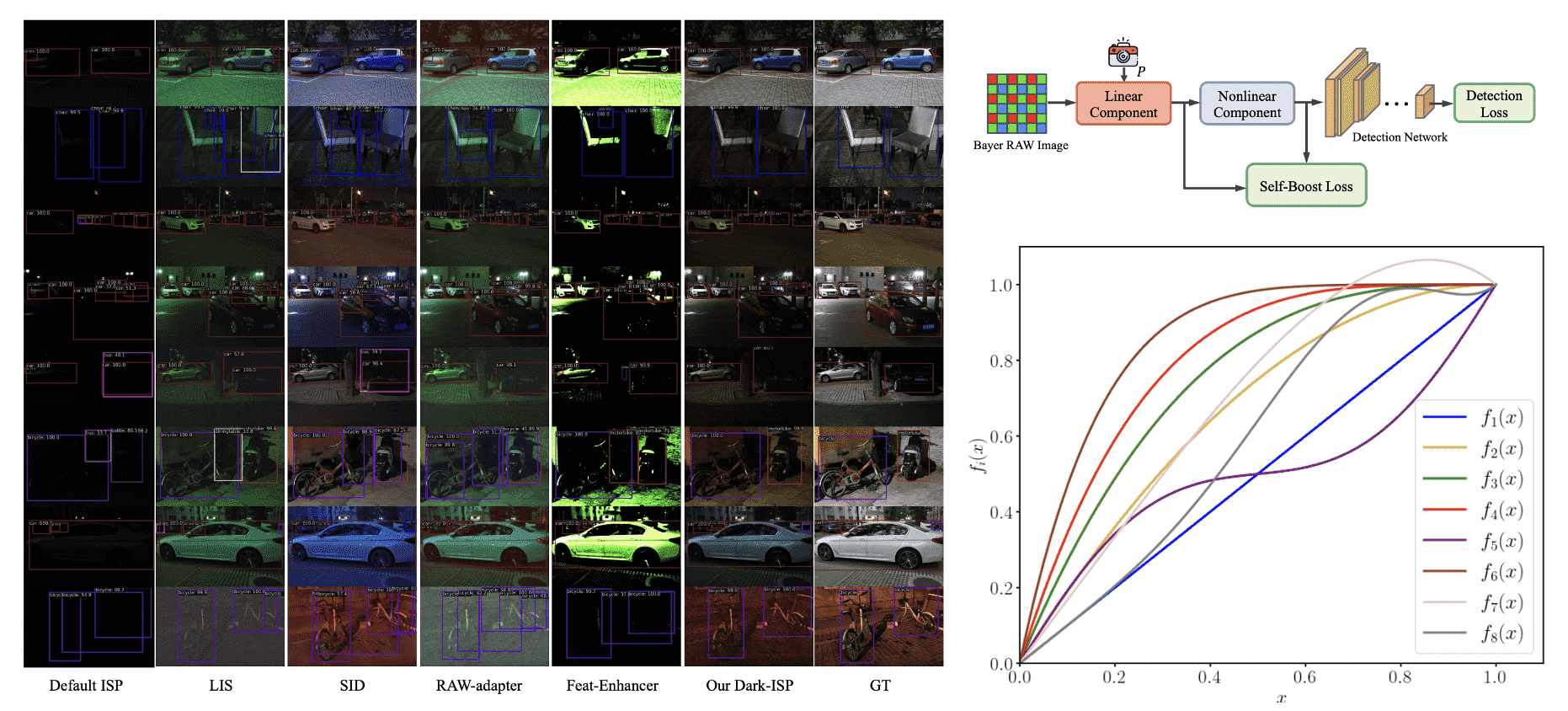

Dark-ISP: Enhancing RAW Image Processing for Low-Light Object Detection

Jiasheng Guo#, Xin Gao#, Yuxiang Yan, Guanghao Li, Jian Pu

- Dark-ISP is a lightweight, self-adaptive ISP plugin that enhances low-light object detection by processing Bayer RAW images. It breaks down traditional ISP pipelines into optimized linear and nonlinear sub-modules, using physics-informed priors for automatic RAW-to-RGB conversion.

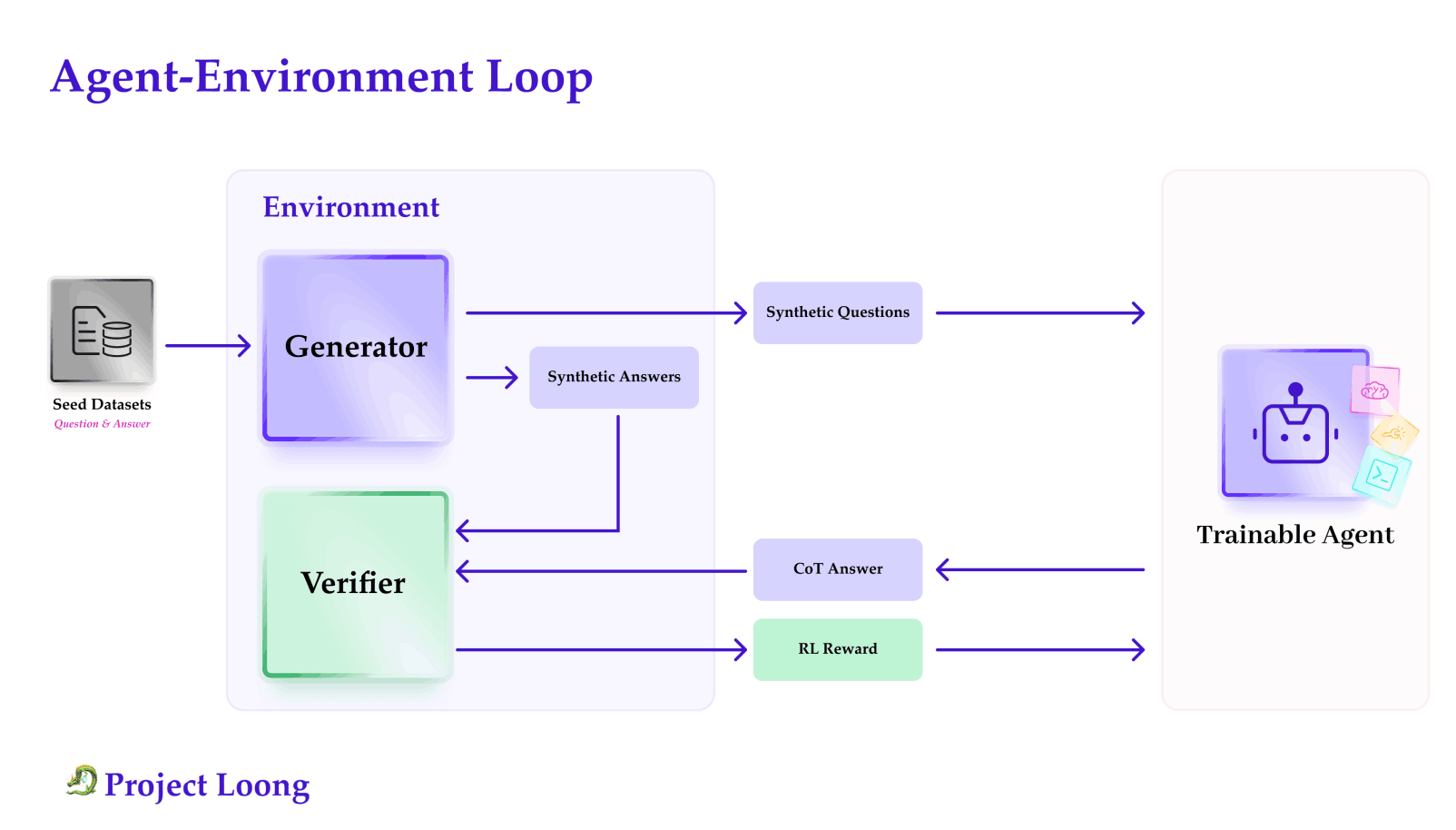

Loong: Synthesize Long Chain-of-Thoughts at Scale through Verifiers

Xingyue Huang, Rishabh, Gregor Franke, Ziyi Yang, Jiamu Bai, Weijie Bai, Jinhe Bi, Zifeng Ding, Yiqun Duan, Chengyu Fan, Wendong Fan, Xin Gao et al.

- Loong is an open-source framework for scalable, verifiable reasoning data. It pairs LoongBench (8,729 human-vetted, code-checkable examples across 12 domains) with LoongEnv, a modular generator forming an agent–environment loop for RL with verifiable rewards and broad-domain CoT training.

🎖 Honors and Awards

- 2023.09 Fudan University Zhicheng Freshman Second Prize Scholarship (Top 5%)

- 2023.06 Outstanding Graduate of Shanghai

- 2022.11 Second Prize in the Chinese Mathematics Competitions (Category A)

- 2021.12 National Scholarship, China

- 2021.09 National Second Prize in the China Undergraduate Mathematical Contest in Modeling

- 2020.12 Shanghai Scholarship

👨‍💼 Academic Service

- Conference Reviewer: NeurIPS 2025, ICCV 2025, ICLR 2026, ICML 2026

📖 Education

- 2023.09 - 2026.06 (expected), Master of Applied Mathematics, Fudan University, Shanghai, China.

- 2019.09 - 2023.06, Bachelor of Mathematics, Donghua University, Shanghai, China.

💻 Internships

- 2026.02 - present, Microsoft Research Asia, Beijing, China.

- 2025.05 - 2025.12, Shanghai AI Lab, Shanghai, China.

📚 Learning Materials

😁 If you would like any of the following materials without watermarks, please contact me by email and specify your intended use.

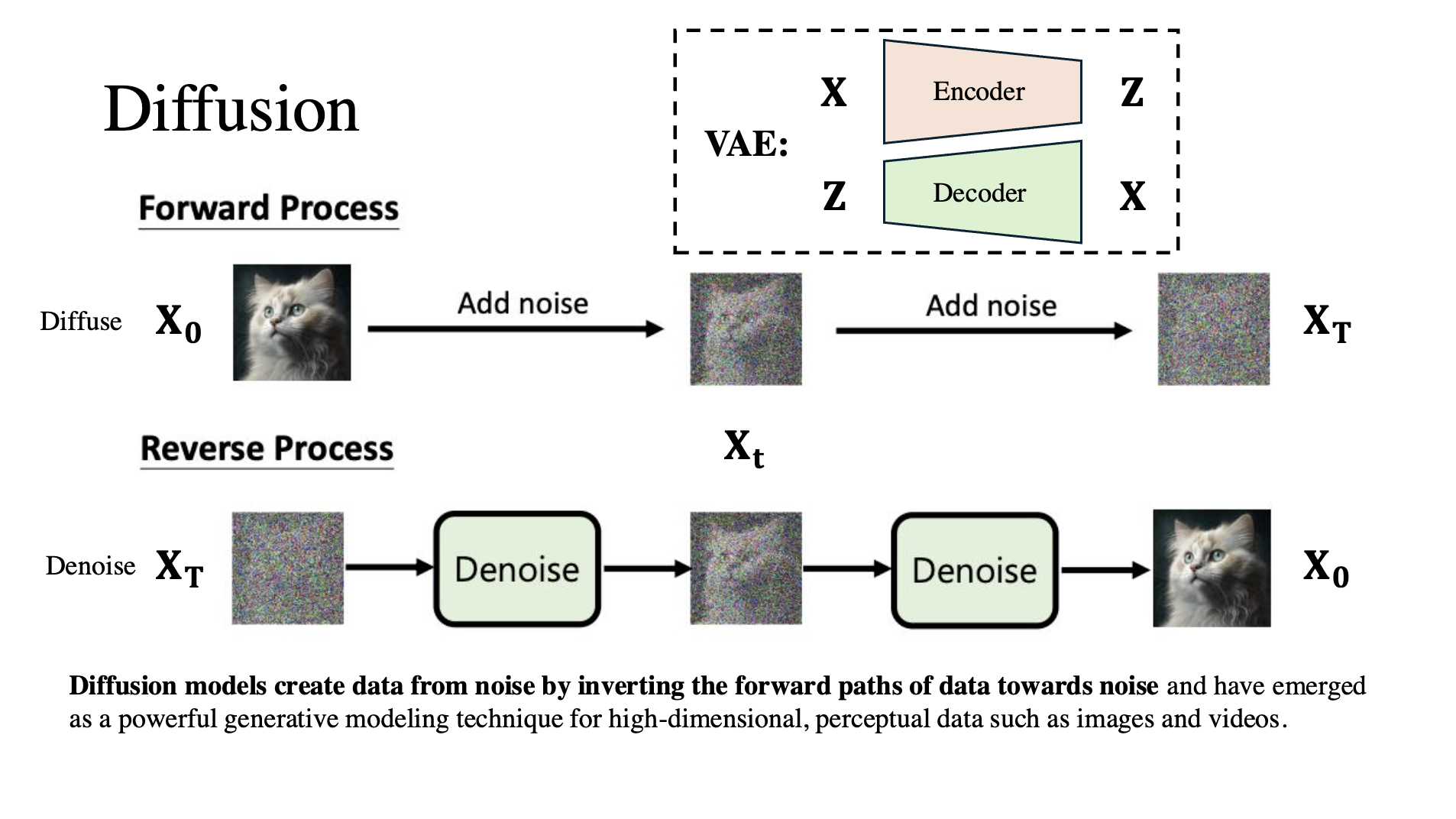

Material 1: Frontiers in Diffusion Model Technologies (1)

This document provides an overview of key concepts in diffusion models, focusing on their theoretical foundations, development timeline, and recent advances. It includes detailed discussions of VAE, DDPM, DDIM, the SDE and ODE formulations, and conditional guidance. It also covers the evolution of Stable Diffusion, including Latent Diffusion, VQ-VAE, and DiT. Lastly, it highlights a recent method, IC-Light, presented at ICLR 2025.

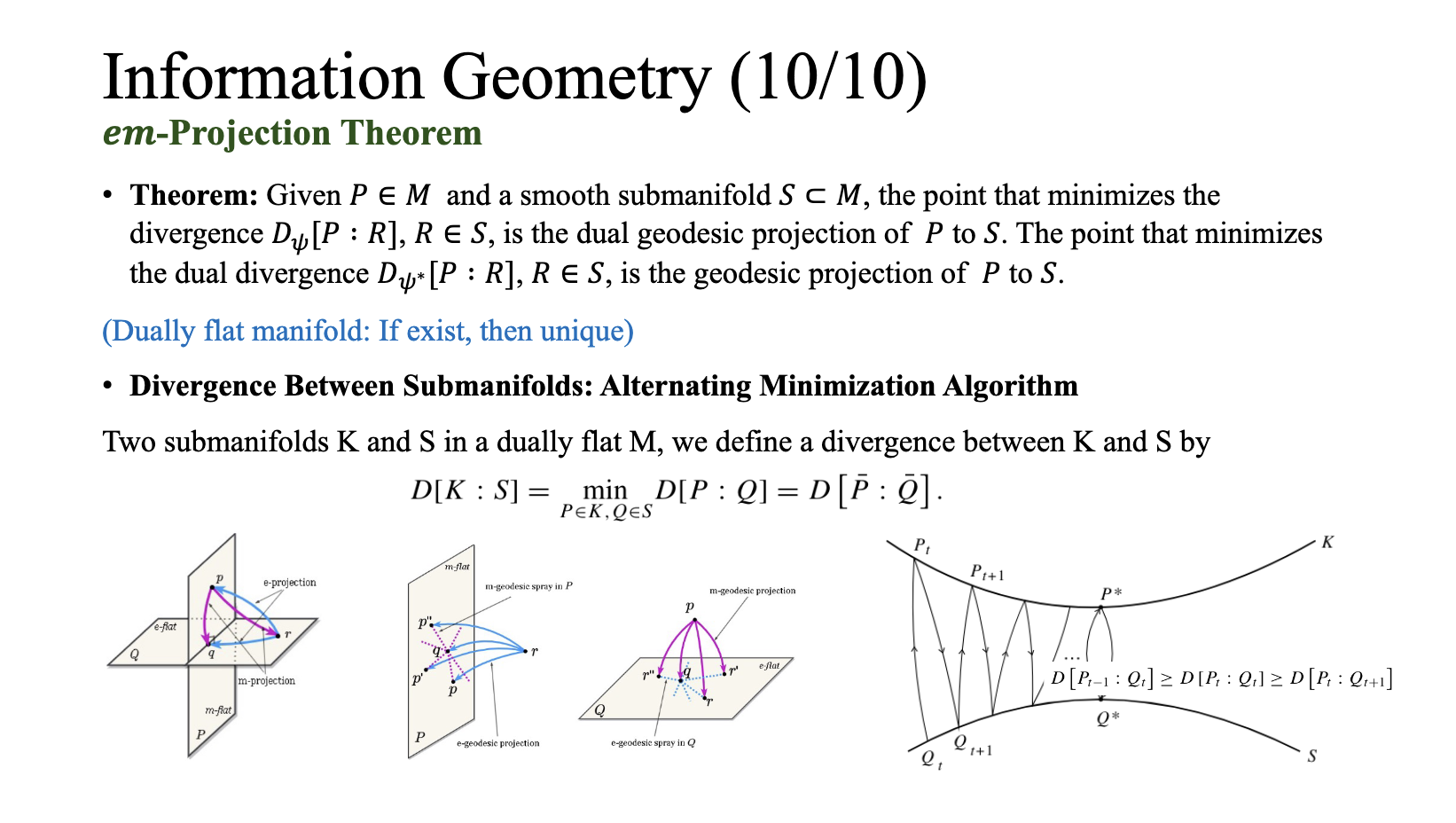

Material 2: Tutorial on Information Geometry and t3-VAE

This document introduces the t3-Variational Autoencoder (ICLR 2024), which uses Student’s t-distributions to model heavy-tailed data distributions and improve latent variable representations. It also explores the framework of Information Geometry, focusing on how generative models can be understood through statistical manifolds, divergences, and Riemannian metrics, providing a deeper understanding of probability distributions and their applications in machine learning, signal processing, and neuroscience.

Material 3: EM Algorithm and X-metric

This document introduces the X-metric framework (PAMI 2023), an N-dimensional information-theoretic approach designed for groupwise registration and deep combined computing, with applications in advanced machine learning tasks. It also covers the theoretical foundations, including entropy, mutual information, maximum likelihood estimation (MLE), and the EM algorithm, alongside the framework’s modifications for deep combined computing and network training.